Resignation of Mrinank Sharma from Anthropic and the Future of AI Safety

By Ipek Kara | 23 February 2026

Summary

The resignation of Mrinank Sharma from Anthropic signals growing state pressure on corporate AI safety structures, particularly as defence demands and geopolitical competition begin to override ethical safeguards.

Governments are increasingly prioritising strategic and military utility over effective governance, creating structural tensions between national security objectives and safety-first commitments within AI companies.

AI safety governance is shifting away from internal corporate departments toward state-driven control through political pressure, where safety becomes subordinated to strategic competition and weakens independent oversight.

Context

On 9 February 2026, Mrinank Sharma announced his resignation from his position as head of the Safeguards Research Team at Anthropic, an AI research company founded in 2021 by former OpenAI researchers with a mission to advance AI safety and alignment. Its Claude models are widely used across many fields around the world.

His departure from the company carries symbolic meaning for the AI world, raising broader questions about safety, in particular whether these companies can uphold objective safety standards amid commercial and political pressures. In his resignation letter, Sharma shared his recent difficulties in letting his values govern his personal and organisational decisions, which became especially challenging under external pressure. It is important to remember that he is not the only AI safety figure to resign in the past couple of months.

Anthropic has recently been under pressure from the Pentagon to loosen restrictions on its models for military applications, including autonomous weapons and intelligence. The Pentagon argues that military use for “all lawful purposes”, including defence-related applications, should be permitted. The pressure escalated to discussions of blacklisting Anthropic as a “supply chain risk”, which underscores the friction between ethical boundaries and political demands in AI safety.

In his letter, Sharma wrote that the “world is in peril,” and framed the current moment as one of interconnected crises that are not limited to AI but also include bioweapons, societal fractures, and other global risks. Media coverage suggests Sharma’s departure reflects internal tension between declared mission statements and daily practice, where safety principles can be challenged by product and commercial priorities.

Implications

The implications of Sharma’s resignation extend beyond internal corporate governance and reveal the structural limits of relying on private firms to manage risks with geopolitical consequences. While AI safety requires regulatory oversight, precision, and restraint, companies and some states, such as the US, are racing to develop powerful models as quickly as possible without proper safety baselines. “Safety-first” branding around innovation is eroding and giving way to a race for revenue and leadership with little regard for ethics, led by American and Chinese innovation hubs, which deepens the ongoing tensions. If senior safety figures increasingly feel misaligned with leading organisations, companies will struggle to retain expertise for long-term risk mitigation and will lose their influence over critical domains such as defence, bioweapons, and international crisis management.

For Transatlantic Governance and Global Stability

The fragmentation between AI safety and cooperation agendas was clearly visible in the related discussions during the 80th session of the UNGA. The US approach prioritises innovation and is reluctant to create binding global standards. Although the EU has long been the gatekeeper of security and safety in digitalisation and AI, it moved to loosen restrictions in the EU Cybersecurity Act in December 2025 in order to attract investment from tech companies and support the startup ecosystem.

Policy and ideological retreats on safety reflect the broader problem of how to reconcile ethical safeguards with political and economic interests. Alongside the ethical problems, current setbacks and pressures caused by governments demanding wider use of AI in defence may increase the risk of cyber and hybrid attacks, because grey areas are growing and safety measures lack transparency. AI safety, therefore, becomes important not only for preventing misuse but also for ensuring stability.

Regardless of the current conflicts, recent developments at the United Nations underscore this urgency. As highlighted during discussions at the 80th session of the UNGA on strengthening international AI governance mechanisms, member states increasingly recognise that voluntary corporate commitments alone are insufficient to address systemic risk. Multilateral dialogue is gradually shifting from principle-setting toward institutional coordination and oversight.

The establishment of IISP-AI earlier this month, with strong support despite US opposition, signals an emerging recognition that AI safety requires structured, international institutional frameworks capable of bridging technical expertise, policy coordination, and security considerations. While it is not yet known whether such an initiative can translate into governance architecture, its emergence with strong support reflects a growing awareness of AI safety’s strategic importance at the global level.

From this perspective, IISP-AI represents an early institutional response to the erosion of purely corporate safety governance. It signals recognition that AI safety must be embedded in structured, international mechanisms rather than left to internal ethics teams operating under commercial and political pressure, as Sharma was at Anthropic.

Forecast

Short-term (Now - 3 months)

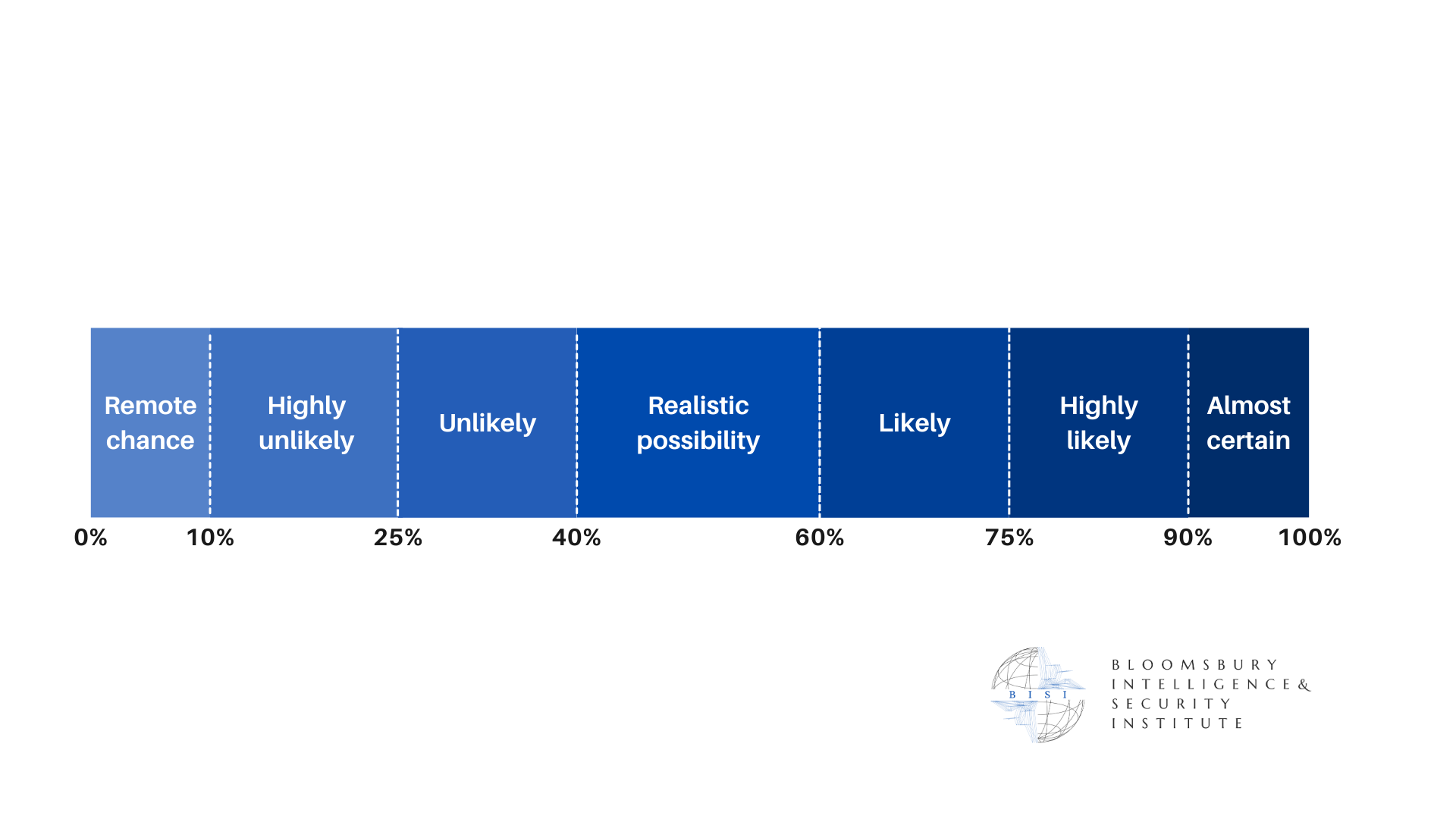

Following the resignation and pressure from the Pentagon, Anthropic is highly likely to respond with renewed commitments.

Multilateral initiatives such as IISP-AI are likely to frame recent developments as evidence of the insufficiency of current corporate governance around AI safety, pushing for further international cooperation.

Medium-term (3 - 12 months)

Tensions between the US’s AI safety objectives and corporate safety are likely to intensify.

As AI integrates further into defence systems, AI governance is likely to be increasingly treated as a global security issue.

Long-term (>1 year)

AI safety is highly likely to become embedded within national security and industrial policy frameworks. Dedicated AI safety units are highly likely to emerge within defence and strategic ministries, reducing reliance on corporate-led oversight while imposing further control.

Institutional competition is likely to become a defining feature of the AI safety landscape as the debate is likely to move towards the question of who controls the architecture of AI governance.