Claude Code Security and the Future of AI-Driven Cybersecurity

By Aryamehr Fattahi | 24 February 2026

Summary

Anthropic launched Claude Code Security on 20 February 2026, a reasoning-based vulnerability scanner that represents a qualitative shift from traditional rule-based static analysis. The announcement triggered a significant cybersecurity stock selloff.

The tool accelerates an emerging competitive dynamic in which Artificial Intelligence (AI) platform providers bundle security capabilities into existing developer products, compressing margins for incumbent vendors and reshaping the cybersecurity value chain.

In the medium term, AI-native security tools are likely to become a standard layer in the software development lifecycle rather than a replacement for established cybersecurity platforms. Structural disruption is most probable in the static analysis and application security segments, while endpoint detection, identity management, and runtime security are likely to remain distinct disciplines.

Context

On 20 February 2026, Anthropic announced Claude Code Security, a new capability embedded in its Claude Code platform, available as a limited research preview for Enterprise and Team customers. The tool scans codebases for security vulnerabilities and suggests targeted software patches for human review. It is built on Claude Opus 4.6, Anthropic's most advanced model. According to Anthropic, Opus 4.6 identified over 500 previously unknown high-severity vulnerabilities in production open-source codebases during internal testing, including flaws that had evaded detection for decades.

Claude Code Security differs from conventional static analysis tools in its core methodology. Where traditional tools match code against known vulnerability patterns, Claude Code Security reasons about code contextually: Tracing data flows, mapping component interactions, and identifying complex vulnerabilities such as broken access control and business logic flaws. Every finding passes through a multi-stage verification process in which the model re-examines its own conclusions, assigns severity ratings and confidence scores, and filters false positives. No fix is applied without explicit human approval.
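The contrast between pattern matching and contextual analysis can be illustrated with a toy sketch. It is not Anthropic's method and does not reflect how Claude Code Security works internally. The rule set, the `get_invoice` handler, and the `unused_identity_params` heuristic are all hypothetical, chosen only to show why a broken access control flaw can contain no "dangerous" pattern for a signature to match.

```python
import ast
import re

# Hypothetical signature rules in the style of a pattern-based scanner.
RULES = {
    "sql-string-concat": re.compile(r"execute\(\s*[\"'].*[\"']\s*\+"),
    "eval-call": re.compile(r"\beval\("),
}

def pattern_scan(source: str) -> list[str]:
    """Return the names of rules whose pattern appears in the source text."""
    return [name for name, rx in RULES.items() if rx.search(source)]

def unused_identity_params(source: str, identity_names=("current_user",)) -> list[str]:
    """Toy contextual check: flag functions that accept the caller's
    identity but never reference it, a common shape of missing
    authorisation. A stand-in for real data-flow reasoning, nothing more."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.FunctionDef):
            params = {a.arg for a in node.args.args}
            # Parameters are ast.arg nodes, so only body references are ast.Name.
            used = {n.id for n in ast.walk(node) if isinstance(n, ast.Name)}
            for name in identity_names:
                if name in params and name not in used:
                    findings.append(f"{node.name}: '{name}' is never checked")
    return findings

# Hypothetical injection flaw: matches a known pattern.
INJECTION = '''
def find_user(db, name):
    return db.execute("SELECT * FROM users WHERE name = '" + name + "'")
'''

# Hypothetical broken access control: any user can read any invoice,
# yet no line looks suspicious in isolation.
HANDLER = '''
def get_invoice(current_user, invoice_id, db):
    invoice = db.find_invoice(invoice_id)
    return invoice
'''

if __name__ == "__main__":
    print(pattern_scan(INJECTION))          # the signature fires
    print(pattern_scan(HANDLER))            # no signature fires
    print(unused_identity_params(HANDLER))  # the contextual check fires
```

The handler is flawed because of what it omits (an ownership check), which is why finding it requires reasoning about how values flow through the function rather than matching text.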

The market reaction was immediate. Cybersecurity stocks fell broadly on 20 February, with losses deepening by 24 February as CrowdStrike and Zscaler each dropped an additional 10%. Pure-play code scanning vendors were hit hardest; JFrog fell 25% and GitLab 8%. CrowdStrike Co-Founder and Chief Executive Officer George Kurtz responded on social media, prompting Claude itself to confirm it does not replicate CrowdStrike's Falcon platform and competes more directly with static analysis vendors.

Anthropic is not the first mover in AI-native security. OpenAI launched Aardvark, a GPT-5-powered autonomous security agent, in October 2025. Additionally, Microsoft and Amazon have deployed internal AI agents for vulnerability remediation, and Google has developed AI-powered code scanning tools.

Implications

With the factual landscape established, the following sections examine the strategic implications of Claude Code Security across markets, industry structure, enterprise adoption, open-source security, and the regulatory environment.

The Stock Selloff: Signal and Noise

The scale of the selloff reveals more about investor positioning than about Claude Code Security's immediate commercial threat. Most affected companies operate primarily in endpoint detection, identity management, and network security, domains that Claude Code Security does not directly address. The selloff was likely amplified by elevated valuations. Bank of America noted the tool poses a significant threat only to dedicated code scanning platforms. The most realistic reading is that the selloff was disproportionate in the short term but signals a genuine structural repricing of disruption risk. The underlying concern is credible: AI platform providers with distribution to millions of developers can bundle security at marginal cost, compressing the pricing power of standalone vendors.

The Dual-Use Dilemma

Anthropic's rationale for releasing Claude Code Security is defensive: To give security teams the same frontier-level capabilities that adversaries will soon wield. This framing is strategically sound but does not resolve the underlying tension. The same reasoning that discovers vulnerabilities can, in principle, be used via the Application Programming Interface (API) to identify exploitable flaws in target systems. The dual-use problem is not new to cybersecurity, but AI changes the economics. Traditional vulnerability research requires scarce expertise; AI-powered scanning democratises that capability, lowering the barrier for both defenders and attackers simultaneously. Anthropic's approach of restricting the preview and requiring customers to scan only code they own is a reasonable initial safeguard. However, competing tools from OpenAI, Google, and others are pursuing similar functionality, and the window in which controlled release provides a meaningful defensive advantage is almost certain to narrow.

Implications for the Cybersecurity Vendor Ecosystem

The structural threat from AI-native security tools is uneven. Pure-play static analysis and application security testing vendors face the most direct competitive pressure. For platform vendors such as CrowdStrike, Palo Alto Networks, and Zscaler, the threat is more indirect. Kurtz's argument that Claude Code Security and Falcon operate at different points in the security lifecycle, pre-deployment scanning versus runtime detection, is technically accurate. However, it understates the risk that AI platforms could progressively expand into adjacent functions using their distribution advantage. It is a realistic possibility that within 12 to 18 months, AI-native security products will cover a broader portion of the security lifecycle, intensifying overlap with incumbents. The deeper dynamic is value redistribution: The significance is not that AI can find vulnerabilities, but that it can now reason well enough to suggest credible fixes, shifting value from detection toward remediation and end-to-end workflow orchestration.

Enterprise Adoption Challenges

For enterprise security teams, Claude Code Security offers clear capability gains alongside significant adoption friction. Industry surveys suggest formal governance frameworks for reasoning-based scanning tools remain the exception, and many Chief Information Security Officers (CISOs) did not anticipate that the capability would arrive this early in 2026. False positive rates remain a critical concern; Claude Code Security's multi-stage self-verification represents an improvement, but no AI system eliminates false positives. This is particularly relevant since these tools currently remain most effective at finding lower-impact bugs, with human operators still needed for higher-level threats. Data residency is another friction point: Sending proprietary source code to an external AI model raises intellectual property and compliance concerns, particularly under the European Union (EU) AI Act. Questions around code persistence and data handling policies will need clear answers before risk-averse enterprises adopt at scale.

Open-Source Security and the "Vibe Coding" Feedback Loop

Anthropic's offer of expedited access for open-source maintainers is perhaps the most consequential element from a systemic security perspective. Open-source software underpins modern applications, yet maintainer capacity is chronically constrained. If AI-powered scanning becomes widely available, it is highly likely to materially improve the baseline security of critical supply chains. However, the window between AI-assisted discovery and patch adoption is precisely where exploitation risk is highest, and smaller projects may lack the capacity to triage at the pace AI tools can discover flaws.

The proliferation of AI-generated code, colloquially termed "vibe coding", creates a self-reinforcing demand cycle for AI-powered security review. Studies consistently find that a significant proportion of AI-generated code contains security flaws, with some estimates suggesting vulnerability rates several times higher than in human-written code. Tools like Claude Code Security are, in part, a response to a problem the AI industry itself has created: Code is being generated faster than humans can secure it. Organisations that embed automated security review into their development pipelines are likely to gain a measurable security advantage.
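One way to picture automated review embedded in a development pipeline is a gate that blocks a merge only when a finding is both severe and high-confidence. The sketch below is a minimal illustration under stated assumptions: the `Finding` shape, the severity labels, and the thresholds are hypothetical and do not correspond to any vendor's actual output format.

```python
from dataclasses import dataclass

# Assumed severity scale; real tools may use different labels.
SEVERITY_ORDER = {"low": 0, "medium": 1, "high": 2, "critical": 3}

@dataclass(frozen=True)
class Finding:
    """Hypothetical scanner finding with a severity rating and confidence score."""
    rule: str
    severity: str
    confidence: float  # assumed to lie in the range 0.0 to 1.0

def blocking_findings(findings, min_severity="high", min_confidence=0.8):
    """Return findings severe and confident enough to block a merge."""
    threshold = SEVERITY_ORDER[min_severity]
    return [
        f for f in findings
        if SEVERITY_ORDER[f.severity] >= threshold and f.confidence >= min_confidence
    ]

def gate_exit_code(findings, **thresholds):
    """Exit code for a pipeline step: 1 blocks the merge, 0 lets it pass."""
    return 1 if blocking_findings(findings, **thresholds) else 0

if __name__ == "__main__":
    sample = [
        Finding("broken-access-control", "high", 0.92),
        Finding("verbose-error-message", "low", 0.99),
        Finding("possible-injection", "critical", 0.40),
    ]
    for f in blocking_findings(sample):
        print(f"BLOCK {f.rule} ({f.severity}, confidence {f.confidence:.2f})")
    raise SystemExit(gate_exit_code(sample))
```

Routing low-confidence findings to human triage instead of blocking on them is a design choice that reflects the false positive concern raised earlier: a gate that fires too often tends to be disabled.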

Forecast

Short-term (Now - 3 months)

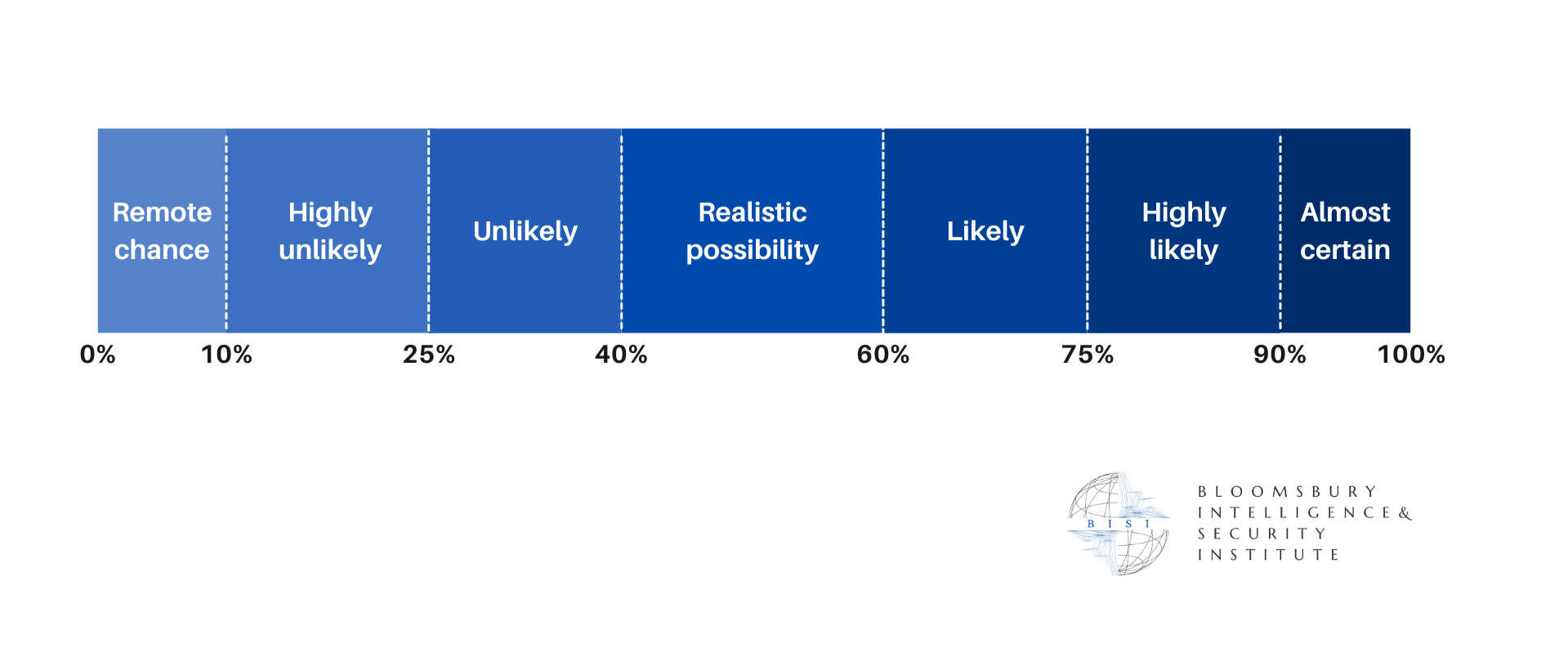

It is highly likely that the cybersecurity stock selloff will partially reverse as investors distinguish between vendors directly exposed to code security competition and those in distinct segments.

Medium-term (3 - 12 months)

It is likely that OpenAI, Google, and Microsoft will accelerate AI-native security offerings, intensifying competition in code-level scanning and remediation. It is a realistic possibility that one or more cybersecurity incumbents will announce strategic partnerships or acquisitions in the AI-native security space.

Long-term (>1 year)

It is highly likely that AI-powered vulnerability scanning will become standard in enterprise development workflows, with majority adoption among large organisations by late 2027. Structural margin compression is likely for pure-play static analysis vendors, and it is a realistic possibility that blurring boundaries between code and runtime security will drive a wave of sector consolidation.