When Voice Is No Longer Proof: AI Vocal Cloning and the Limits of Voice-Based Authentication

By Hannah-Rose Shearman | 2 March 2026

BISI is proud to present this piece in collaboration with CyberWomen Groups CIC. Through this partnership, we have combined our expertise in political risk with their knowledge of cyber security to deliver a fresh perspective on emerging threats.

CyberWomen Groups CIC is a student-led initiative dedicated to diversifying STEM by supporting and connecting university students interested in or studying cybersecurity, regardless of gender identity.

Summary

AI vocal cloning poses a significant risk to the security of voice-based authentication systems widely deployed in high-risk sectors such as finance and public services.

The increasing accessibility and realism of synthetic voice technology undermine the effectiveness of vocal biometrics, thereby increasing fraud exposure and operational strain for organisations that rely on voice verification.

Continued exploitation of voice-cloning capabilities is driving a shift away from voice as a trusted primary authentication factor, reshaping the future risk profile of digital identity systems.

Context

Vocal biometric authentication is widely deployed across financial services and government organisations for remote identity verification, including voice identification systems used by institutions such as HM Revenue & Customs (HMRC) and major retail banks. It has been regarded as both secure and accessible, offering an alternative to passwords and physical authentication mechanisms, and enabling access to services for individuals with lower levels of digital literacy or complex accessibility needs.

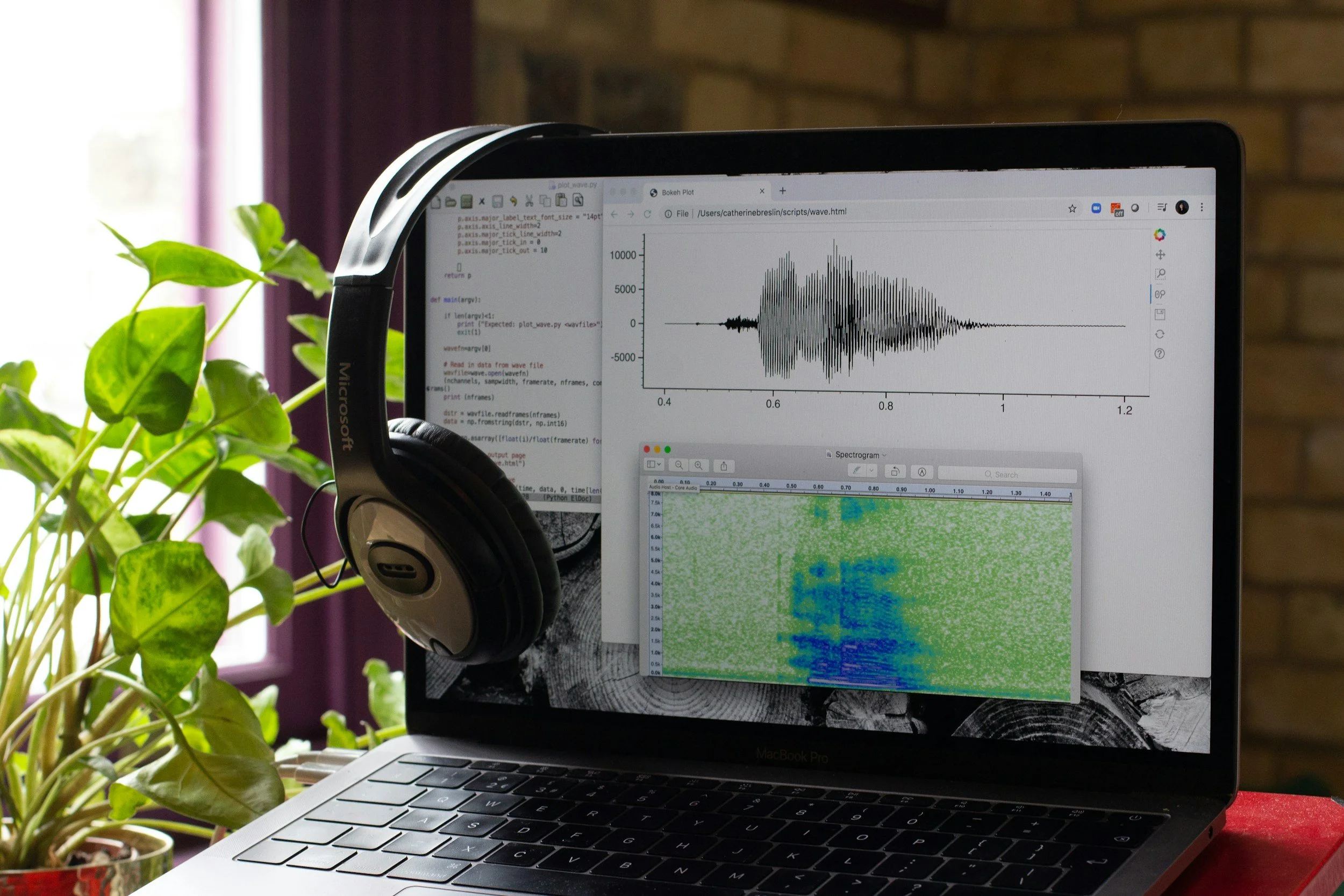

However, recent advances in AI-enabled voice synthesis and cloning have fundamentally altered the threat landscape in which these systems operate. Academic and industry research has demonstrated that modern voice cloning tools can replicate vocal characteristics with high fidelity and bypass traditional authentication controls. Techniques that were previously resource-intensive and difficult to scale can now be performed with minimal technical expertise, owing to the increasing accessibility of commercial voice synthesis platforms such as ElevenLabs. This weakens long-standing assumptions about the uniqueness and reliability of voice as a standalone proof of identity. The risk is further amplified by the widespread availability of voice data through social media platforms and other public digital spaces, where audio and video content is frequently shared with limited awareness of its potential misuse. Together, these developments expose voice-based authentication systems to new forms of impersonation and fraud that were not anticipated when the systems were adopted.

Implications

The increasing feasibility of AI-enabled voice cloning reshapes the political, operational, security, and economic risk landscape for institutions that rely on voice-based authentication.

From a security perspective, vocal cloning undermines the assumption that voice functions as a reliable biometric identifier. Unlike passwords or physical tokens, voice data is frequently and voluntarily exposed through public platforms, making it difficult to control or revoke once compromised. As synthetic speech becomes increasingly indistinguishable from that of legitimate users, institutions face an elevated risk of impersonation attacks that bypass existing authentication controls, particularly in automated, remote, or call centre-based interactions.

Operationally, the reduced technical and financial barriers associated with AI voice cloning enable a broader range of actors to engage in voice-based fraud. This shift increases the volume of attempted attacks, even when individual attempts lack sophistication. Higher attack frequency places sustained pressure on fraud detection teams, call centre staff, and incident response processes. At the same time, as institutions tighten controls in response, additional authentication challenges and verification failures contribute to higher rates of false positives, in which legitimate users are wrongly flagged or rejected. This disrupts legitimate access and erodes user confidence in voice-based systems.
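The link between stricter verification and false positives can be illustrated with a minimal sketch. It assumes a voice system that produces a similarity score between 0 and 1 and accepts a caller when the score meets a threshold. The function name `rates_at` and every score below are hypothetical and invented purely for illustration; they do not describe any real deployment or vendor. In biometric terms, a legitimate caller who is rejected counts as a false reject, and a cloned voice that is accepted counts as a false accept.

```python
from dataclasses import dataclass


@dataclass
class Rates:
    threshold: float
    false_accept_rate: float  # share of cloned-voice attempts that are accepted
    false_reject_rate: float  # share of genuine callers that are rejected


def rates_at(threshold, genuine_scores, impostor_scores):
    """Compute error rates for an 'accept if score >= threshold' rule."""
    false_accepts = sum(s >= threshold for s in impostor_scores)
    false_rejects = sum(s < threshold for s in genuine_scores)
    return Rates(
        threshold,
        false_accepts / len(impostor_scores),
        false_rejects / len(genuine_scores),
    )


# Hypothetical similarity scores, invented for illustration only.
GENUINE = [0.91, 0.88, 0.79, 0.95, 0.84, 0.73, 0.90, 0.86]
CLONED = [0.62, 0.81, 0.77, 0.55, 0.85, 0.70, 0.68, 0.74]

if __name__ == "__main__":
    for t in (0.70, 0.80, 0.90):
        r = rates_at(t, GENUINE, CLONED)
        print(
            f"threshold={r.threshold:.2f}  "
            f"false accepts={r.false_accept_rate:.3f}  "
            f"false rejects={r.false_reject_rate:.3f}"
        )
```

With these invented scores, raising the threshold from 0.70 to 0.90 cuts accepted clones from five in eight to none, but rejected genuine callers rise from none to five in eight. The better cloned voices score, the more the two groups overlap and the costlier this trade-off becomes for legitimate users.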

There are also significant economic implications. Elevated fraud activity results in direct financial losses and increased costs for investigation, remediation, and customer support. Over time, these operational and financial pressures diminish the efficiency gains that originally motivated the adoption of vocal biometrics, such as reduced handling times and streamlined customer verification.

At a political and regulatory level, the growing divergence between institutional authentication practices and emerging AI capabilities exposes gaps in consumer protection and accountability frameworks. Key stakeholders include financial institutions, government bodies, regulators, and end users. While organisations continue to deploy voice biometrics as a trusted control, victims of AI-enabled impersonation encounter limited avenues for redress in jurisdictions where legal frameworks have not yet adapted to address synthetic voice misuse.

For end users, the erosion of trust in voice-based authentication systems carries broader societal implications. Individuals who depend on voice authentication for accessibility reasons face disproportionate disruption as institutions introduce additional controls or restrict access. Collectively, these dynamics indicate that AI vocal cloning constitutes not only a technical challenge but also a systemic risk that affects institutional trust, service accessibility, and the resilience of digital identity systems.

Forecast

Short-term (Now - 3 months)

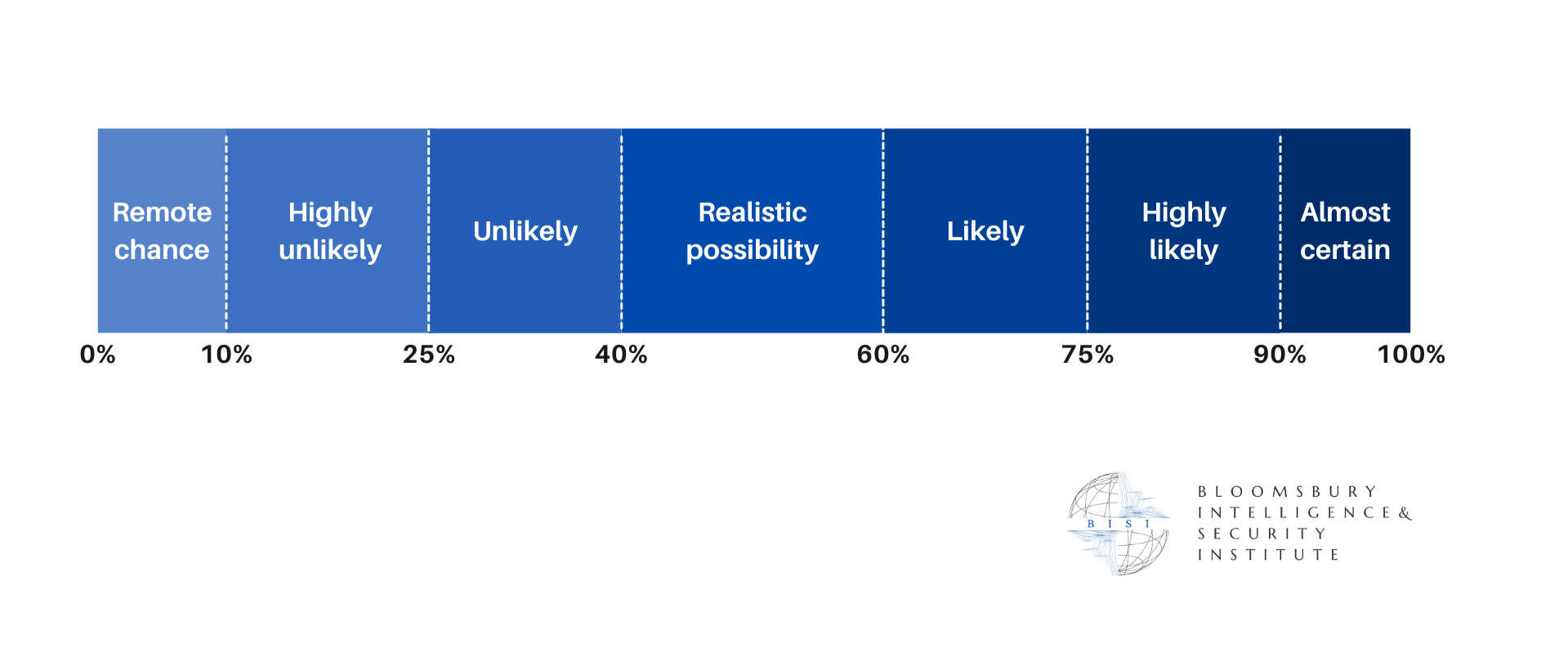

AI voice cloning will likely enable scalable impersonation attacks against voice-authenticated services, increasing exposure to targeted financial fraud and unauthorised account access.

Medium-term (3 - 12 months)

As barriers to entry continue to fall, AI voice cloning is very likely to drive higher volumes of impersonation attempts, placing sustained operational and financial strain on institutions reliant on voice verification.

Long-term (>1 year)

Continued reliance on voice biometrics is likely to accelerate the erosion of trust in voice as a primary identity control, weakening the resilience of voice-based authentication frameworks.