US AI Framework and the Politics of Deregulation

By Martyna Chmura | 14 April 2026

Summary

President Donald Trump’s National Policy Framework for Artificial Intelligence (AI) prioritises deregulation and federal pre-emption, aiming to remove barriers to innovation while centralising authority.

The absence of binding rules and the ongoing legislative conflict between federal and state actors are creating regulatory uncertainty for organisations and slowing coherent governance.

This approach risks reinforcing industry power and delaying accountability, making the United States (US) AI regulatory environment increasingly fragmented and politically contested.

Context

On 20 March 2026, the Trump Administration released its National Policy Framework for Artificial Intelligence, outlining priorities including innovation, free speech, national security, and workforce development. The framework calls on Congress to remove “unnecessary barriers” to AI development and prevent a “patchwork” of state-level regulation, arguing that a unified national approach is necessary to maintain US competitiveness.

However, the framework introduces no binding obligations or enforcement mechanisms. Instead, it repeatedly states what “Congress should” do, leaving implementation uncertain. Legal analysis suggests the policy seeks to pre-empt state regulation without establishing a clear federal alternative, creating ambiguity around future compliance requirements.

Political responses have been immediate, but it remains unclear whether the framework’s recommendations will be enacted. Republican House leaders have signalled support for advancing the administration’s priorities, and Sen. Marsha Blackburn has introduced the TRUMP AMERICA AI Act to establish a national standard. At the same time, bipartisan opposition to the moratorium on AI regulation has emerged in Congress. On 20 March, Democratic lawmakers introduced the GUARDRAILS Act to block President Trump’s December 2025 executive order from taking effect and to preserve states’ authority to adopt AI safeguards. Meanwhile, states have continued to legislate, making AI governance a broader contest over federalism, consumer protection, and the allocation of regulatory authority in the US AI market.

Implications

The Trump Administration’s Framework is built around a clear policy choice: federal pre-emption and lighter regulation are presented as conditions for faster innovation, stronger competitiveness, and national leadership in AI. The March 2026 document asks Congress to pre-empt “cumbersome” state AI laws, avoid creating a new AI regulator and rely largely on sector-specific rules and industry-led standards instead. It also combines limited safeguards in areas such as child safety, fraud, copyright and energy costs with a broader effort to reduce what the administration sees as compliance burdens on developers and deployers.

The administration’s approach has not resolved regulatory fragmentation. The White House argues that a “patchwork” of state AI laws could undermine US competitiveness and has explicitly called for federal pre-emption of “cumbersome” state rules. Yet the March framework remains non-binding, and the December 2025 executive order “Ensuring a National Policy Framework for Artificial Intelligence” did not itself invalidate any state law. Instead, it ordered a 90-day federal review of existing and proposed state AI laws and created a Justice Department task force to challenge those deemed “onerous and excessive”. With that deadline having passed in mid-March without a public Commerce Department report, firms are left facing the prospect of future federal intervention without clarity on which state measures may be targeted first. Rather than simplifying compliance, the result is a more unsettled regulatory environment.

That uncertainty is especially significant because states continue to legislate. California’s 30 March executive order N-5-26 imposed new contracting safeguards on AI vendors, including expectations around bias, child safety, surveillance, and unlawful discrimination, thereby diverging from the administration’s more deregulatory posture. More broadly, states have advanced measures on deepfakes, child safety, transparency, discrimination, and incident reporting, with more than 100 AI-related state laws reportedly already enacted or newly adopted. What is emerging, therefore, is not merely a jurisdictional dispute between federal and state authorities but a broader contest over who should define acceptable risk in the US AI market. In effect, states are advancing the view that innovation without enforceable safeguards is politically difficult to sustain, while the administration has suggested that such safeguards may themselves impede innovation.

From a governance perspective, the framework raises three interlinked concerns. First, consumer protection remains partial: the document acknowledges public concerns but does not establish concrete national obligations on auditing, testing, disclosure, or liability. Second, legal uncertainty persists for firms operating across jurisdictions where state rules remain active but may later face federal challenge. Third, the framework sharpens the question of federalism in digital governance, namely whether states should retain authority to regulate technologies whose effects are already being felt in schools, labour markets, procurement systems, and public services.

For companies, the framework may reduce formal regulatory pressure in theory while increasing strategic uncertainty in practice. Large firms are better placed to absorb ambiguity, manage multi-state compliance and shape future rulemaking. Smaller firms, public-sector deployers and downstream users are less able to do so. In that respect, pre-emption without a clear replacement framework may not yield a more efficient regulatory environment; it may instead deepen existing asymmetries in scale, legal capacity and market power.

Forecast

Short-term (Now - 3 months)

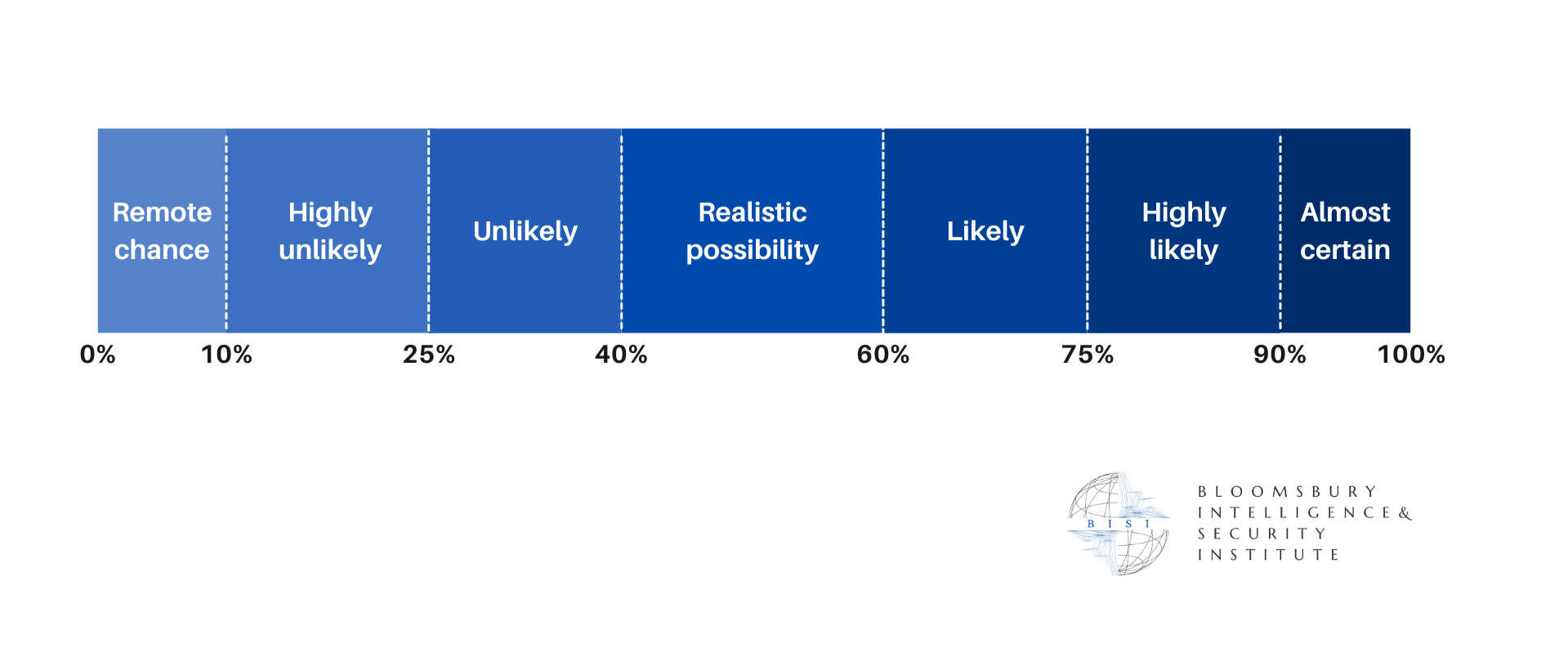

The absence of the Commerce Department’s review of state AI laws will likely prolong regulatory ambiguity, with firms maintaining compliance with existing state rules while monitoring signals of potential federal challenges.

Continued state-level action, particularly in large markets such as California, is highly likely to sustain a fragmented regulatory environment, limiting the immediate effect of federal pre-emption efforts.

Medium-term (3 - 12 months)

Congressional attempts to translate the framework into legislation will likely face resistance, with proposals to pre-empt state AI laws or establish a national standard encountering bipartisan constraints.

Legal challenges between federal and state authorities are a realistic possibility, shaping the balance of power in AI governance and increasing compliance complexity for multi-state operators.

Long-term (>1 year)

A hybrid regulatory model combining partial federal coordination with active state-level governance is likely to persist, requiring organisations to manage overlapping and evolving compliance obligations.

Market dynamics are likely to favour large firms with the capacity to navigate regulatory fragmentation, while smaller providers may face higher barriers to entry as governance requirements and legal uncertainty increase.