OpenAI-DoW Deal: Institutionalising AI Governance Inside US National Security

By Ipek Kara | 4 March 2026

Summary

On 27 February, the United States’ Department of War (DoW) finalised an agreement with OpenAI to integrate the company’s models into classified networks.

The agreement was finalised right after the Pentagon designated Anthropic a “supply chain risk”, which ended Anthropic’s previous agreements and funding.

The agreement signals a structural shift in AI governance from corporate-supported safety guidelines to state-integrated oversight, embedding AI directly within the US national security infrastructure and decreasing outside intervention over governance principles.

Context

On 28 February 2026, the CEO of OpenAI, Sam Altman, announced the latest agreement with the DoW for the deployment of the GPT 4.1 system into the department’s classified networks. This followed the federal government’s classification of Anthropic as a “supply chain risk” after the company refused to remove safety restrictions around certain military applications of its AI systems. Significantly, OpenAI’s classified deployment in turn raises questions about transparency and the evolving balance between private innovation and state power as political alignment increasingly becomes a determinant of market access.

Implications

The divergence between the two companies lies not in the security principles themselves but in how those principles are operationalised under federal pressure. Anthropic’s agreements with the DoW contained contractual prohibitions, reportedly including restrictions against mass domestic surveillance of US citizens and fully autonomous lethal weapons. Through these non-negotiables, Anthropic retained a veto over how its systems could be deployed, even in situations the US government considered lawful.

OpenAI’s framework is structurally different. While stating its commitment to responsible deployment, the company situated safety enforcement within the DoW’s existing internal supervision structures and compliance with US law, and it therefore imposes less strict restrictions on the DoW’s actions.

This change alone does not necessarily weaken safeguards on the integration of AI. However, it does relocate governance authority. The central question thus shifts to whether existing state oversight mechanisms are sufficient to monitor deployment effectively. A critical channel for OpenAI’s involvement in oversight is the deployment of Forward Deployed Engineers (FDEs) within the DoW to oversee the systems, which lets the company retain influence through technical proximity.

In public remarks following the announcement, Altman also indicated that the safety conditions governing OpenAI tools’ deployment should apply uniformly to all companies working with the DoW, becoming a proposed baseline standard for federal agreements. Although this could create a unified system, it could also reduce the space for alternative governance models and limit the market access of politically non-aligned companies.

Moreover, the sudden designation of Anthropic as a “supply chain risk” after a disagreement with the DoW over safety measures will affect how other AI firms approach federal partnerships and may lead them to change their frameworks. Historically, the “supply chain risk” designation has been reserved for foreign adversaries, as in the case of Huawei. This case therefore shows how decisive political alignment has become for staying in the market.

Forecast

Short-term (Now - 3 months)

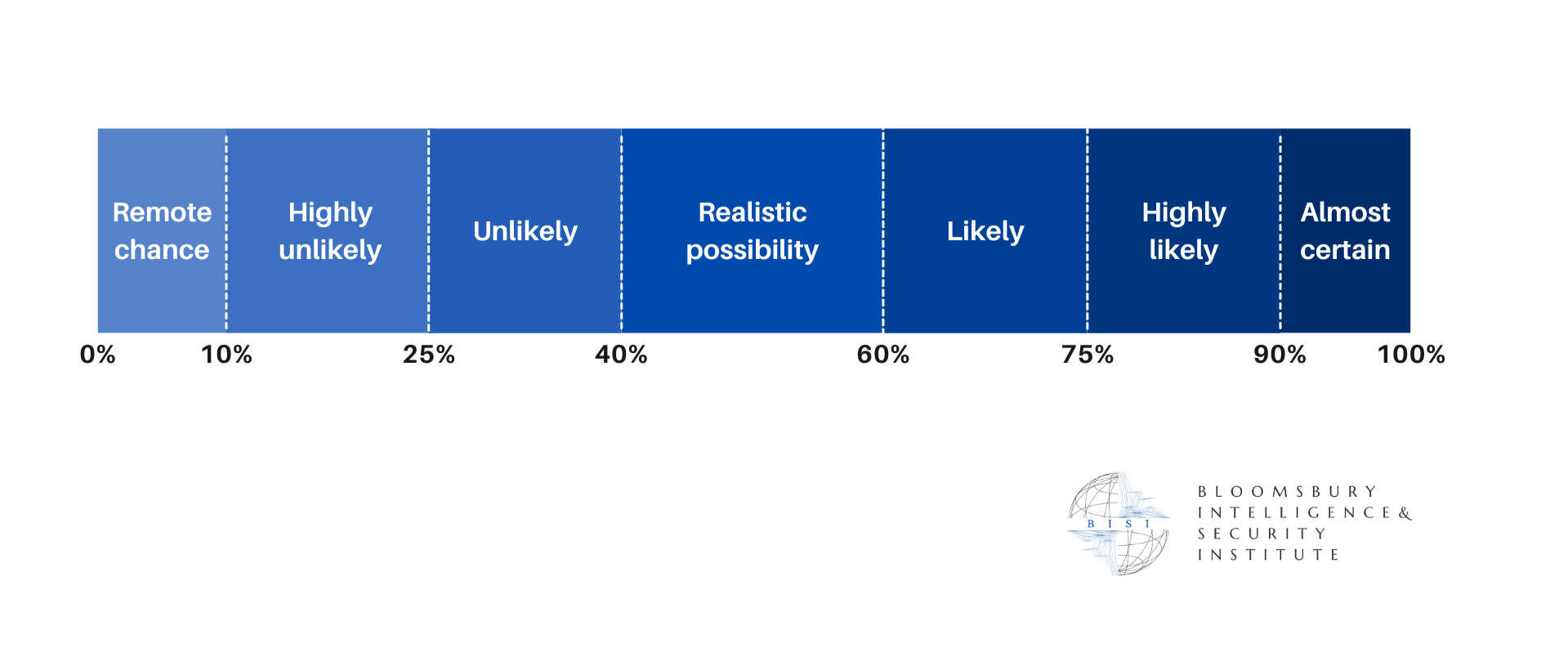

Anthropic is highly likely to file for an immediate stay against the “Supply Chain Risk” designation, triggering a constitutional test of the Defense Production Act’s power over private safety protocols.

It is highly likely that OpenAI will mobilise the first cohort of FDEs to be embedded within the DoW’s “Stargate” compute cluster by May 2026 to start the classified migration process.

Medium-term (3 - 12 months)

A “Defense AI Oligarchy” is likely to emerge if the DoW mandates the “OpenAI Standard” for all contracting companies, gradually sidelining most firms with independent safety boards and devaluing politically neutral AI startups.

Long-term (>1 year)

The AI systems integrated into defence are highly likely to transition from being tools to being core components of national security infrastructure. This has a remote chance of raising structural risk in the event of excessive dependency on a small number of private providers (e.g., OpenAI and Amazon), or of unlawful and premature integration of AI into surveillance and weapon systems.

The integration of OpenAI systems into US defence infrastructure is likely to influence standard-setting within NATO and encourage allied states to adopt AI governance and safety frameworks closely modelled on US procurement models. While this is likely to accelerate technological cohesion within the alliance, it would simultaneously increase dependency on US-based AI providers and narrow the space for European AI startups and diverging governance frameworks.