North Korea Uses AI-Generated Workers to Infiltrate European Firms

By Martyna Chmura | 1 April 2026

Summary

North Korea has developed a state-backed “fake worker” industry that uses stolen identities, generative artificial intelligence (AI), and support networks such as laptop farms to secure remote IT roles, evade sanctions, and generate hard currency for the regime.

After infiltrating more than 300 US companies between 2020 and 2024, this model is now spreading into Europe, where recruitment and remote‑work processes have not been treated as national‑security exposure points.

AI‑enabled labour infiltration could become a persistent tool of sanctions evasion and cyber‑espionage, forcing European regulators and firms to treat workforce authentication, supply‑chain due diligence and AI‑driven identity fraud as strategic risks rather than purely HR issues.

Context

North Korean IT-worker operations have evolved from an illicit fundraising mechanism into a hybrid threat that spans fraud, cyber intrusion, and sanctions evasion. US law-enforcement actions found that operatives posing as remote developers infiltrated more than 300 US companies, using stolen or fabricated identities, shell entities, and laptop farms to obtain employment and channel at least US$6.8 million back to Pyongyang.

Building on that model, North Korean units are now targeting European employers in Germany, Portugal and the UK by posing as software engineers, DevOps specialists and AI or machine‑learning contractors working fully remotely. Their approach combines traditional identity theft, that is, hijacking or buying access to dormant professional profiles, with AI‑enabled deception: large language models to generate convincing CVs and “localised” cover letters, and deepfake video filters or AI avatars to pass remote interviews. Laptop farms in third countries then enable operatives to intercept corporate hardware, log in via VPNs, and quietly work multiple jobs under different identities, while routing a significant share of salaries back to the regime.

Implications

At the core of this development is a shift in where states can exploit digital vulnerability. North Korea’s fake-worker model does not rely on breaching a firewall at the outset; it relies on being invited inside through routine corporate processes. Most firms still treat hiring as an administrative or compliance function, whereas in this case, it is serving as the access vector. Generative AI, used by North Korean entities to research jobs and draft cover letters, increases the effectiveness of that approach by reducing many of the indicators that previously exposed fraudulent applicants. Convincing CVs, culturally plausible written communication, and consistent interview performance can now be produced at scale, making deception both more credible and more repeatable.

The security implications extend well beyond illicit wage generation. Once placed inside a company, a fraudulent employee may obtain privileged access to devices, credentials, internal documentation, source code repositories, cloud infrastructure, and collaboration systems. In at least one case cited by the US Department of Justice, a North Korean remote-worker scheme resulted in unauthorised access to International Traffic in Arms Regulations (ITAR)‑controlled technical data at a US defence contractor, illustrating how this model can move from payroll fraud to sensitive system access. That changes the nature of the threat from financial deception to insider-enabled compromise. Such access can support espionage, intellectual-property theft, data exfiltration, extortion, or the insertion of malicious code into development environments. The risk is particularly acute in AI and machine-learning roles, where access may include training data, model-development workflows, safety mechanisms, or infrastructure with wider strategic and commercial value. In that sense, the model provides Pyongyang with more than revenue: it offers proximity to sensitive technical ecosystems inside foreign firms.

For Europe, the issue also highlights a structural weakness in current sanctions and compliance frameworks. Existing measures against North Korea are primarily designed to restrict financial flows, trade, and technology transfer. They are less well adapted to a model in which a sanctioned state extracts value through apparently legitimate labour contracts mediated by remote-work platforms, intermediaries, and cross-border payment channels. As a result, the labour market has become a relatively under-protected route for sanctions evasion and technical access. The expansion of these operations into European jurisdictions suggests that this gap is no longer theoretical. It reflects a broader mismatch between the way states regulate economic coercion and the way hostile actors now exploit digitally mediated work.

More broadly, this is a governance problem created by AI-enabled convergence. Recruitment fraud, insider risk, sanctions evasion, and supply-chain compromise increasingly sit on the same attack surface. The likely policy response will be a shift from static pre-employment checks towards continuous workforce authentication, tighter control over device shipping and provenance, and closer integration among HR, legal, compliance and security teams. That shift is already implicit in threat guidance from the FBI and in industry reporting from Google, both of which recommend stronger identity verification, device controls and post‑employment access management.

Forecast

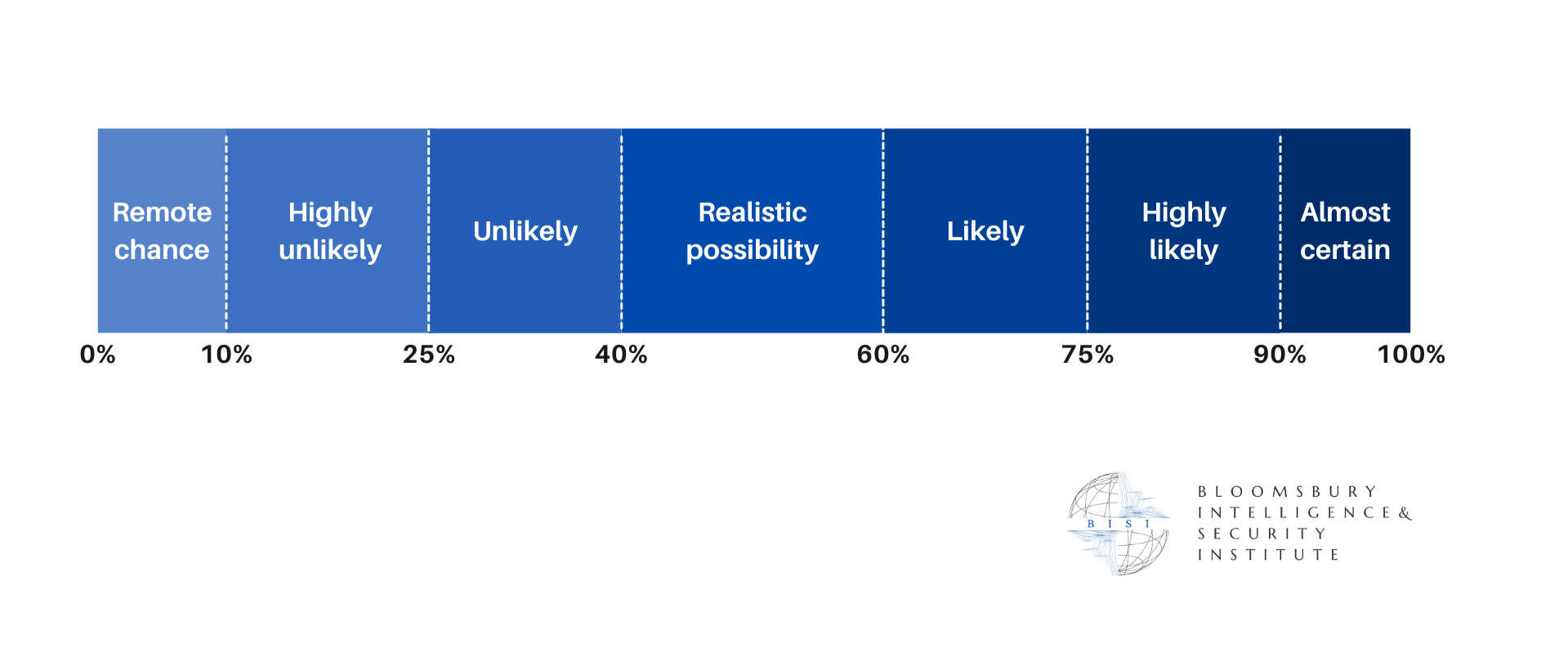

Short-term (Now - 3 months)

More Europe-linked cases are likely to surface, especially in software, cloud, cybersecurity, and contractor-heavy sectors, as defenders start looking for indicators already documented by the DOJ, FBI, and Google.

Medium-term (3 - 12 months)

It is likely that European regulators will start to integrate workforce authentication and contractor due diligence into financial‑sanctions and cyber‑security guidance, encouraging or requiring stronger identity verification for remote technical roles and tighter controls on equipment shipping.

Long-term (>1 year)

There is a realistic possibility that AI-enabled labour infiltration will become a standard tactic used by other hostile or sanctioned actors, leading to a more securitised hiring model in critical sectors and a further collapse of the old boundaries between cyber risk, insider threat, fraud, and compliance.

It is a realistic possibility that, by the end of the decade, European firms in critical sectors will be subject to explicit legal duties to detect and report suspected state‑linked “fake workers”, with failures treated similarly to lapses in anti‑money‑laundering or sanctions screening.