Meta’s Multibillion-Dollar Deal with Google: A Strategic Shift in the AI Chip Market

By Carlotta Kozlowskyj | 9 March 2026

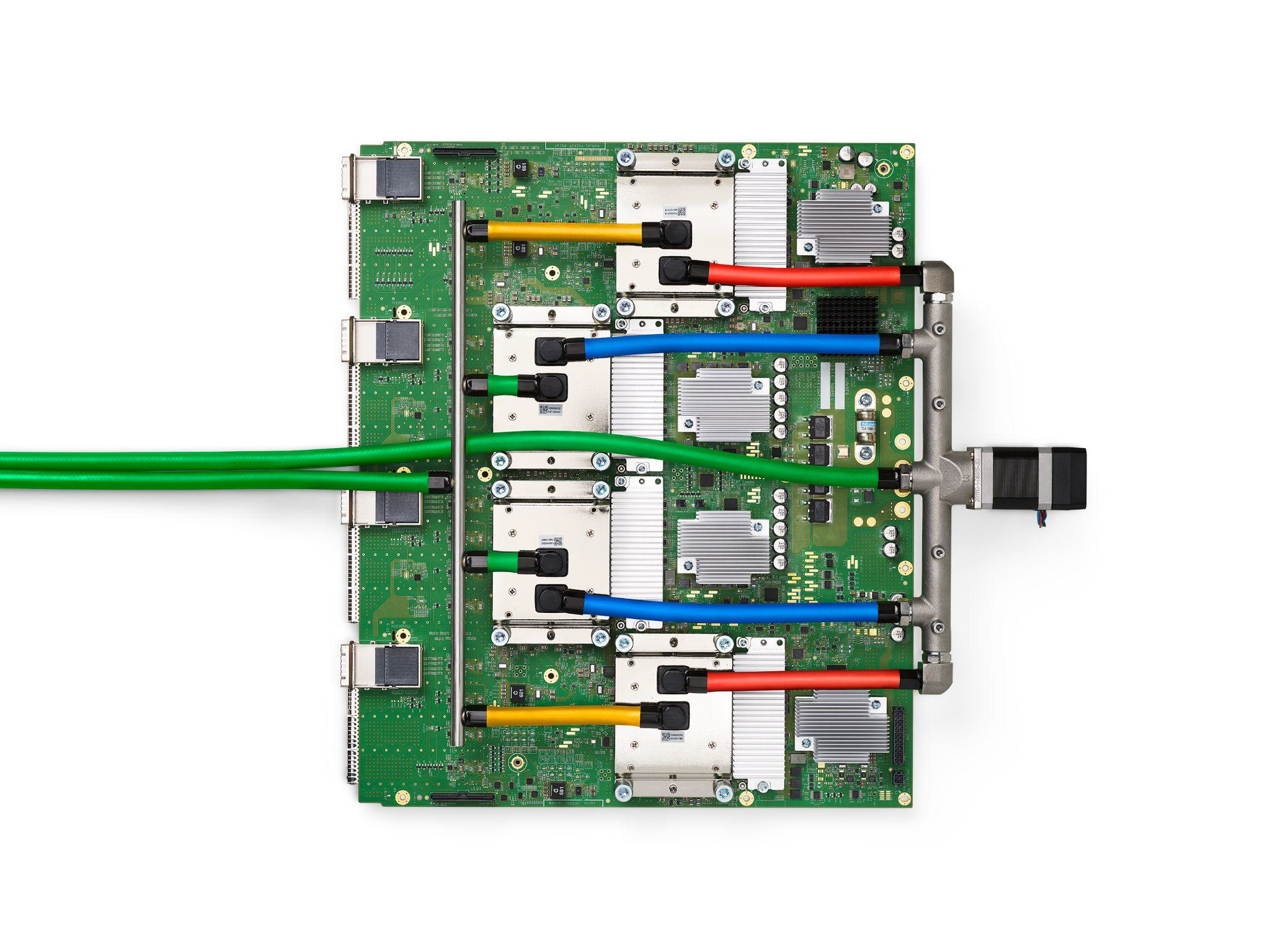

Cliff Young/Wikimedia Commons, CC BY 4.0

Summary

On 26 February 2026, Meta signed a multibillion-dollar deal with Google for access to Google's Tensor Processing Units (TPUs), marking a significant shift in its AI infrastructure strategy away from dependence on a single supplier, Nvidia.

The deal matters to both companies: it addresses Meta's demand for AI compute at scale while positioning Google's TPUs as a credible commercial alternative, further fragmenting a chip market dominated by Nvidia.

In the short term, Nvidia will retain its dominance as the industry standard for AI training, but growing alternatives and competition signal a long-term structural fragmentation of the AI chip market.

Context

On 26 February 2026, Meta Platforms signed a multibillion-dollar deal with Google to rent Google's artificial intelligence (AI) chips, the TPUs, in order to develop and run its next generation of AI models. Under the terms of the arrangement, Meta will access TPUs hosted in Google's data centres rather than purchasing the chips outright, a cloud-based rent-before-buy approach. Reports indicate that Meta is also in early discussions to purchase TPUs directly for deployment in its own data centres as early as 2027. Google's TPUs are a viable alternative to Nvidia's market-leading GPUs, such as the H100 and B200, and the deal therefore poses a significant challenge to Nvidia's dominance of the AI chip market.

Google has developed TPUs internally since 2015, initially for its own products, such as YouTube recommendations, and later for its Gemini family of AI models. Although Google has offered TPU access through Google Cloud for several years, supplying them at this scale to a major rival marks a significant strategic shift.

The deal aligns with Meta's multi-vendor strategy to expand its AI infrastructure and diversify its chip supply. Meta has also signed a deal to buy current and future Nvidia GPUs, as well as a five-year, USD 60b agreement to deploy AMD MI450 GPUs in its data centres starting in the second half of 2026. In parallel, Meta continues to develop its own training chip, internally known as Artemis, designed to complement rather than replace external suppliers. Meta's projected capital expenditure on AI infrastructure for 2026 is between USD 115b and USD 135b, up to nearly double the USD 72b spent in 2025, underscoring its ambitions.

Implications

The clearest winners from this deal are Meta and Google. For Meta, the partnership directly addresses its demand for AI computing power, which consistently exceeds the available supply of Nvidia GPUs; Nvidia's pricing power on its H200 and B200 chips has also created both supply and cost risks at scale. Renting Google TPUs offers Meta lower costs and additional capacity that complements its existing AI infrastructure and workloads. For Google, the deal validates the commercial viability of TPUs beyond its own internal use and generates significant cloud revenue. Meta's commitment is a highly visible seal of approval, signalling to the broader market that TPUs are a credible alternative to Nvidia's AI training chips.

The most immediate loser in this transaction is inevitably the market leader, Nvidia. While it retains an overwhelmingly dominant position, accounting for about 80% of the AI training chip market and with a market capitalisation of over USD 3t, the emergence of viable alternatives accelerates market fragmentation. Nvidia responded quickly to the new partnership by publicly asserting that its platform is "a generation ahead of the industry" and "the only platform that runs every AI model", reflecting the strategic pressure it faces. Additionally, as previously reported by BISI, although the US Department of Commerce eased export restrictions on Nvidia H200 chips to China on 13 January 2026, as of late February Nvidia had yet to confirm a single shipment. Consequently, Nvidia faces growing competition from Google TPUs and AMD while remaining unable to access its most promising market.

This deal reflects a growing willingness among major buyers of AI compute to seek alternatives, even from rivals in other domains. Although Meta and Google are fierce competitors in digital advertising and AI assistants, they still agreed to a multibillion-dollar deal that blurs traditional competitive boundaries. This shows how pressing the need for AI infrastructure has become, to the point of outweighing rivalries elsewhere.

One of the most significant implementation risks is the technical complexity of moving Meta's AI workloads, which are built to run on Nvidia GPUs, onto Google's TPUs, which are primarily programmed through frameworks such as JAX and TensorFlow. Nvidia's CUDA software ecosystem has become the de facto standard for AI development, and moving away from it requires significant engineering effort across code, tooling, and workflows.

Forecast

Short-term (now to 3 months)

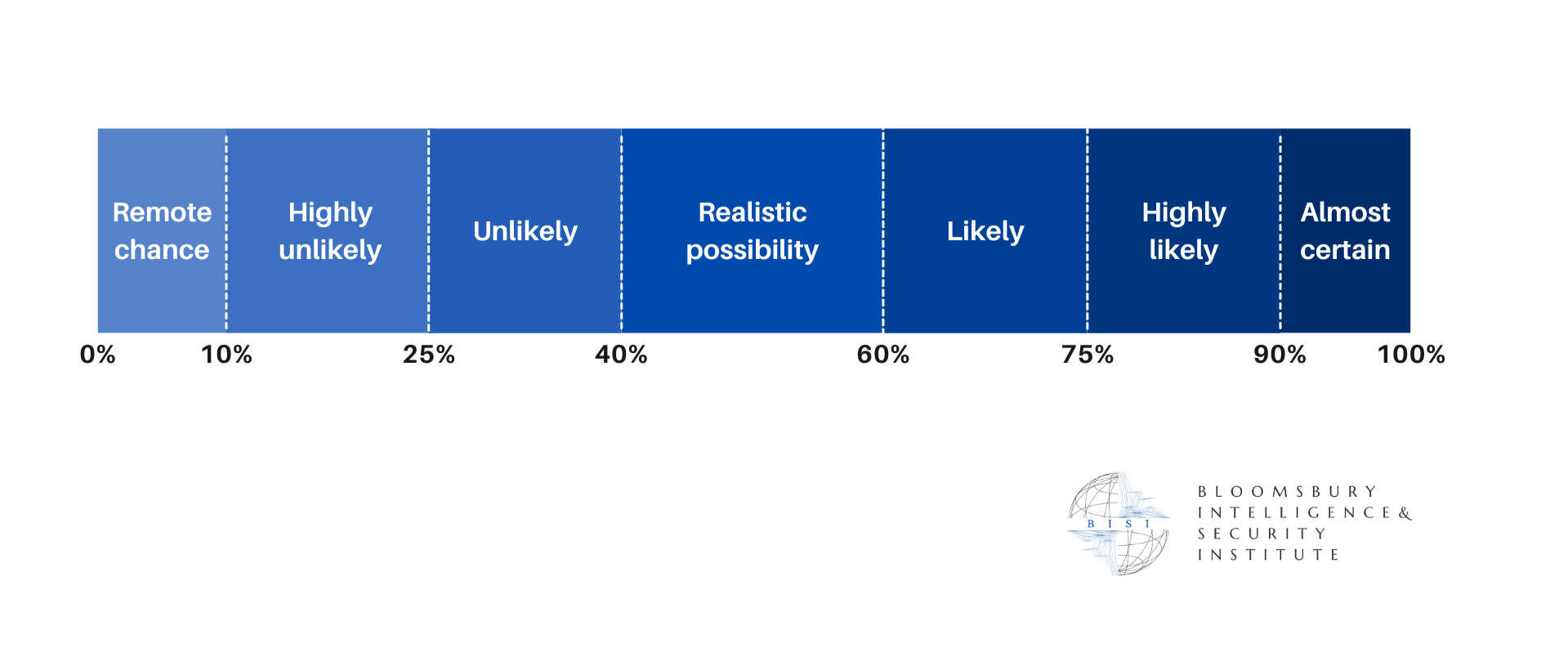

It is highly likely that Nvidia will remain the dominant AI chip provider, as its hardware performance is unmatched across general-purpose AI workloads.

It is highly likely that Google's TPU commercialisation will accelerate, as the Meta deal will encourage other companies, such as Microsoft and Amazon, to form similar partnerships.

It is likely that Meta's chip transition will encounter technical friction and engineering challenges, delaying full operationalisation of the deal.

Long-term (>1 year)

It is likely that the AI chip market will fragment further, significantly diminishing Nvidia's share of the AI training chip market as it faces increasing competition and alternatives.

It is likely that Meta’s rent-before-buy approach to Google’s TPUs will become a model for others to follow, allowing companies to test multiple chips before committing to large capital purchases.

It is likely that as Meta develops its own AI training chip, Artemis, it will increasingly reduce its reliance on external vendors for specific workloads.