Iran conflict as a testing ground for AI warfare systems

By Carlotta Kozlowskyj | 24 March 2026

Airman 1st Class William Rio Rosado/Wikimedia

Summary

The US-Israeli military campaign against Iran, launched on 28 February 2026, marks the first large-scale deployment of generative AI targeting systems against a sovereign state, effectively serving as a testing ground for algorithmic warfare.

The conflict reveals how AI systems are being used to overcome military constraints, raising fundamental questions about human oversight, the role of private technology companies in warfare and compliance with International Humanitarian Law (IHL).

The military use of AI targeting signals an irreversible shift towards automated battlefield decision-making that will challenge and reshape global security governance.

Background

The ongoing US-Israeli military campaign against Iran, launched on 28 February 2026, offers an unprecedented opportunity to assess the role and performance of AI-driven targeting systems at operational scale in a conventional interstate conflict. These systems were first deployed at scale by Israel in Gaza between 2023 and 2025, but that application differs fundamentally from their current deployment against a sovereign state with organised military infrastructure. Significantly, the US and Israel relied on AI to identify and strike over 1,000 targets within the first 24 hours of their attack on Iran, and more than 2,000 within a week.

Methodology

The US relies on its Maven Smart System (MSS), an AI-driven data analysis platform built by Palantir Technologies and powered by Anthropic’s Claude AI. Maven provides real-time targeting for military operations, suggests precise location coordinates, recommends weaponry and reportedly evaluates the legal grounds for strikes under IHL, before presenting options to a human operator. However, on 4 March 2026, the Trump Administration designated Anthropic a “supply chain risk” after the company refused to allow unrestricted use of its technology for autonomous weapons. As a result of this dispute, the administration is moving to replace Claude with OpenAI’s technology.

Israel’s AI targeting capability, developed by Unit 8200, centres on two complementary systems: the Gospel, which identifies infrastructure, and Lavender, which identifies suspected militants based on social networks and communications. These systems can analyse patterns in large volumes of surveillance data, such as satellite imagery and social network activity, and generate recommendations for military strikes, significantly reducing human oversight. They were reportedly used to analyse the movement patterns of Iran’s senior leadership, contributing to the assassination of the Supreme Leader Ali Khamenei on 28 February 2026.

Implications

The Iran conflict illustrates how AI systems, unlike human analysts, can generate hundreds of targets within days rather than dozens over months, radically increasing the speed of the targeting process. AI is now being leveraged to overcome long-standing military constraints, such as limited capacity for target identification, marking a fundamental shift in how wars are planned and executed. The conflict also reflects a profound asymmetry in the conduct of warfare. On one side, the US and Israel are equipped with advanced AI-enabled precision targeting; on the other, Iran has responded primarily with volume-based drone and missile barrages lacking equivalent technology. However, this asymmetry is unlikely to persist, as it will drive Iran and other adversaries to accelerate their own military AI programmes, a trend to which Russia and China, among others, are already responding.

The conflict also highlights how deeply embedded leading AI companies are in modern warfare, most notably Palantir, Anthropic, AWS and OpenAI, which are all crucial components of US military targeting. In March 2025, NATO even signed a contract with Palantir to acquire its Maven Smart System in order to better support Allied Command Operations. This creates a new dynamic between governments and private companies, as the former are becoming increasingly reliant on the latter for their most sensitive military capabilities. At the same time, those companies face difficult ethical choices over how their technology is applied. The Anthropic case is particularly illustrative of this tension, as its refusal to lift ethical barriers led to its replacement in a major government programme by other AI companies such as OpenAI.

Human rights organisations and legal scholars are also increasingly alarmed that the speed and scale of algorithmic targeting risk lowering the threshold for the use of force and undermining core principles of IHL, in particular the obligation to distinguish between combatants and civilians, especially since AI systems rely on statistical inference and patterns, not legal certainty. There are also growing concerns about accountability and the lack of transparency about how much decision-making is being ceded to AI.

Forecast

Short-term (Now - 12 months)

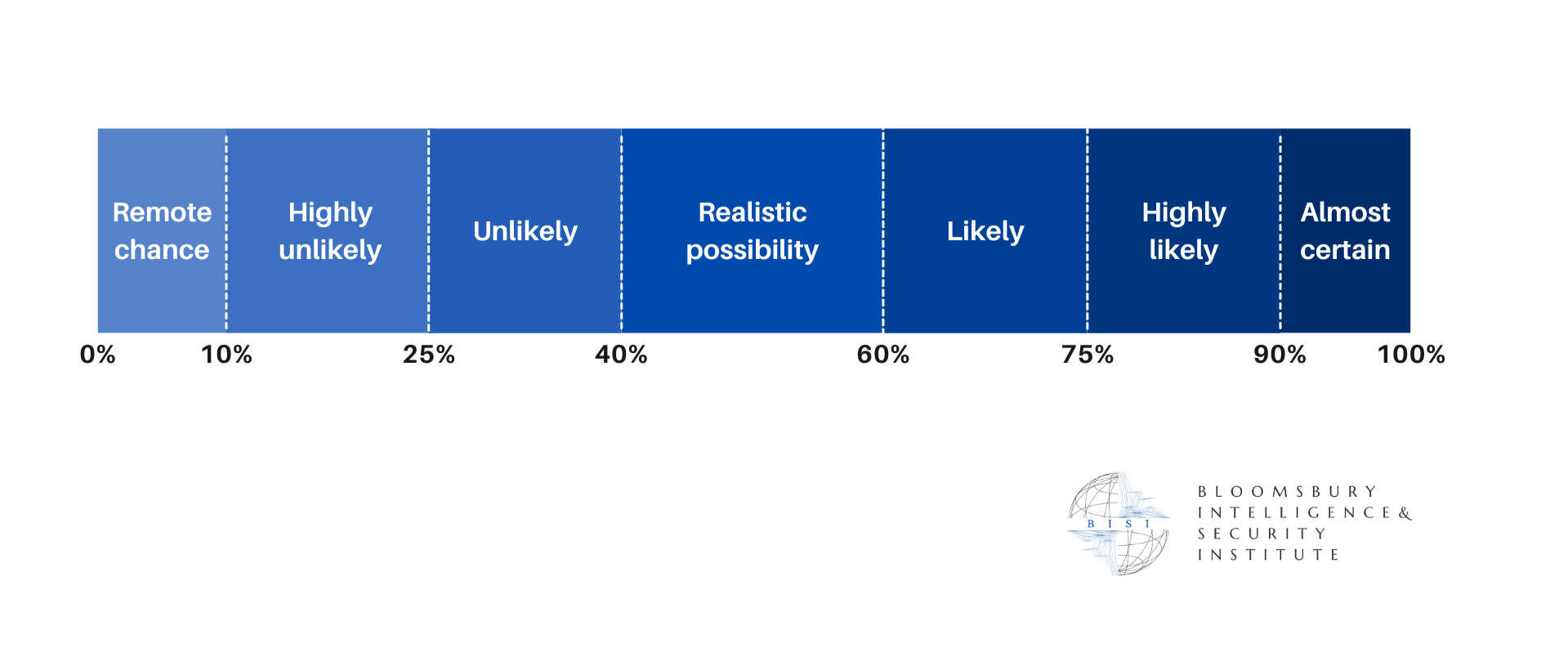

It is very likely that AI targeting will be adapted and refined based on lessons from Iran, improving system accuracy, reducing error rates and expanding operational capabilities.

It is very likely that other major militaries, such as those of China and Russia, will accelerate their military AI programmes given the demonstrated strategic value of such systems.

It is likely that the tensions between the Trump Administration and private AI companies will intensify over ethical concerns.

Long-term (>1 year)

It is likely that this conflict will accelerate the global race to automate the battlefield, as AI becomes the standard in advanced military conflicts.

There is a realistic possibility of even less human oversight in military decision-making, particularly in targeting and intelligence analysis.

It is very likely that International Humanitarian Law will face fundamental challenges from the widespread deployment of autonomous AI systems.