Gender Inequality In Digital Technologies And Online Violence

By Martyna Chmura | 15 March 2026

Summary

Women face two parallel digital realities: many experience harassment and deepfakes amplified by Artificial Intelligence (AI), while hundreds of millions remain excluded from meaningful digital access and skills.

Women also remain underrepresented in AI development and governance, increasing the likelihood that digital systems reproduce gender bias and structural inequalities.

As AI becomes embedded in labour markets, public services and information systems, governments and technology companies are likely to face growing pressure to treat gendered digital harms and exclusion as systemic governance risks.

Context

Digital technologies are frequently presented as tools for economic inclusion and gender equality. However, gender disparities in access and participation remain significant. Research indicates that women in low- and middle-income countries are approximately 15% less likely than men to use mobile internet, leaving around 265m fewer women than men online globally. Women are also less likely to own smartphones, limiting their access to digital education, financial services and labour markets.

At the same time, digital platforms increasingly expose women to targeted abuse. Research indicates that deepfake pornography accounted for approximately 98% of all deepfake videos online in 2023, with around 99% of the individuals targeted being women. Generative AI tools have lowered the cost and expertise required to produce manipulated images, audio and video, allowing harmful content to be produced and distributed at scale.

Women also remain underrepresented in the creation of these technologies. Women hold less than 27% of technology-related jobs globally, limiting their influence over how digital systems are designed and governed. In Europe, women account for roughly 19% of tech professionals, while in the United States (US) women hold about 27% of computing roles, with African American women and Hispanic American/Latina women representing only 3% and 2% respectively.

Implications

The interaction between AI, online violence and structural inequalities in digital access is reshaping how women experience digital technologies. While AI is often framed as a potential pathway to gender equality and economic empowerment, evidence shows that women are disproportionately exposed to AI‑enabled harms and underrepresented among those who design and govern these systems. Across OECD countries, women remain a minority in ICT specialist roles and, at current rates, parity in the ICT workforce is many decades away. Globally, women hold only about 22% of AI‑related jobs and occupy only around 14% of senior executive roles.

Generative AI increases the scale and credibility of reputational attacks on women, with particularly acute consequences for those in public life. Synthetic media tools enable malicious actors to fabricate sexual or defamatory content cheaply and distribute it rapidly across platforms. In the 118th US Congress, 26 female members were identified as victims of sexually explicit deepfakes, with women 70 times more likely than men to be targeted; around one in six congresswomen had been subjected to non‑consensual intimate imagery. Similar cases have emerged internationally: Northern Ireland politician Cara Hunter and Italian Prime Minister Giorgia Meloni have both faced pornographic deepfake attacks, illustrating how synthetic media can be weaponised to intimidate or discredit women in leadership.

These attacks carry systemic political risks. UN bodies and independent researchers find that technology‑facilitated violence, including deepfakes and gendered disinformation, is a key factor in women’s decisions to self‑censor, withdraw from social media or avoid standing for office. When coordinated harassment targets female candidates, journalists or human‑rights defenders, it shapes political discourse by driving women out of the conversation rather than contesting their ideas, undermining inclusive governance and the quality of democratic debate. The Stimson Center warns that AI‑enabled violence against women and girls is approaching a “tipping point” where online hostility becomes a structural barrier to women’s participation and a threat to democratic resilience itself.

At the same time, unequal participation in the technology sector limits women’s influence over how AI is built and regulated. OECD and UNDP analyses show that women are underrepresented in ICT and AI education pipelines, in technical roles and in national AI‑strategy processes, even as algorithmic systems are deployed in areas such as hiring, credit scoring and public‑service delivery. Systems trained on biased data can reproduce stereotypes and discrimination, especially when designers are not trained to recognise gendered risks.

This combination of higher exposure to harm and lower influence over governance creates a structural tension at the heart of the digital economy. Governments and international organisations routinely promote AI and digital transformation as tools for advancing the Sustainable Development Goals, including gender equality and economic inclusion. Yet reports show that gender considerations remain weakly integrated into AI policy analysis and governance frameworks.

As AI becomes embedded in labour markets, electoral processes and information ecosystems, addressing gender inequality in digital technologies is therefore not just a social policy concern but a political and economic imperative. It will shape who feels safe to run for office, who is heard in public debate, and whose interests define the rules of the emerging digital order.

Forecast

Short-term (Now - 3 months)

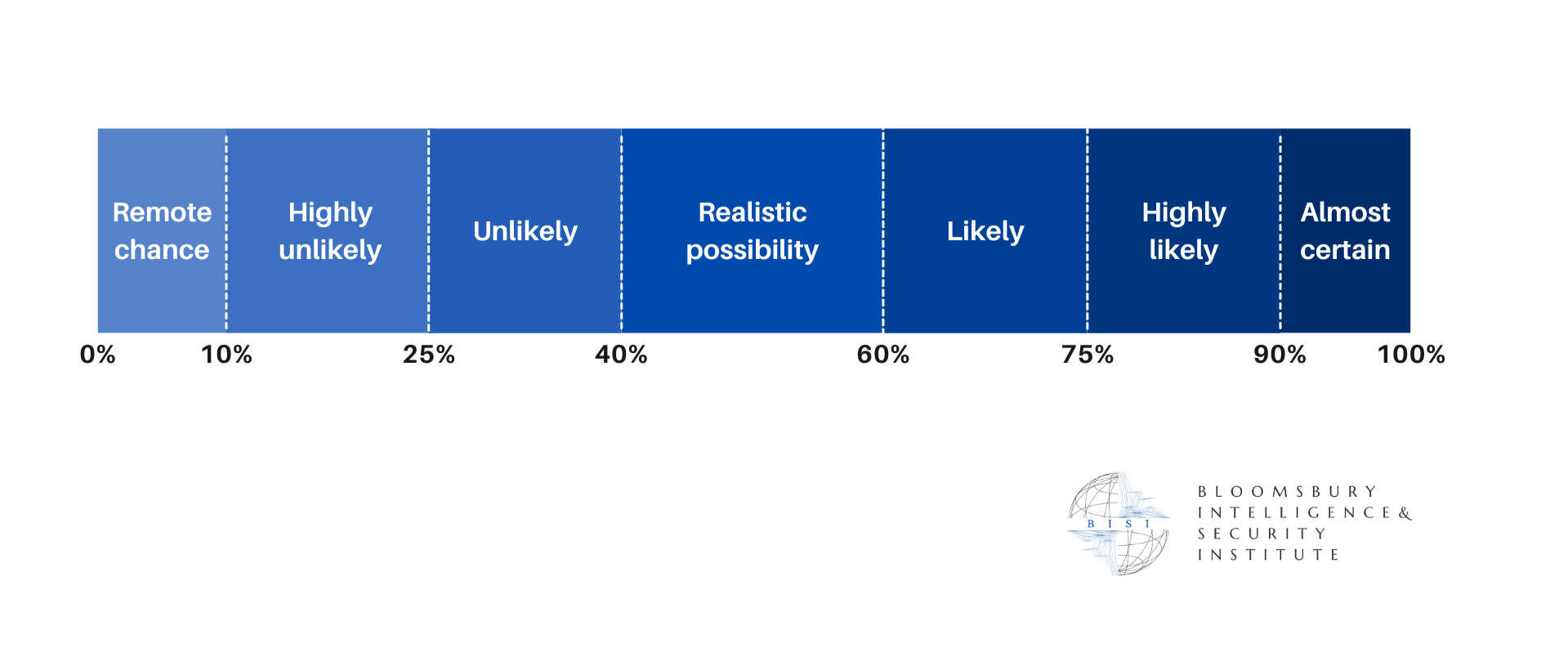

Regulators and technology companies are likely to increase attention to gender-based online violence in debates on deepfake regulation and platform safety measures.

Technology platforms are likely to expand reporting mechanisms and moderation tools targeting non-consensual synthetic imagery.

Medium-term (3 - 12 months)

More jurisdictions are likely to introduce legislation criminalising non-consensual deepfakes and strengthening platform obligations to remove abusive content.

There is a realistic possibility that policymakers and international organisations will begin integrating gender considerations into AI governance frameworks, including guidance on algorithmic bias, digital violence and gender-responsive risk assessments.

Long-term (>1 year)

Gendered online violence and algorithmic bias will likely become recognised systemic risks in digital governance frameworks, with regulators requiring stronger transparency, auditing and safety mechanisms for AI systems used in employment, finance and public services.

It is unlikely that the global gender digital divide will close in the near term. Without sustained investment in connectivity, digital education and women’s participation in technology sectors, structural gaps in digital access and AI skills are likely to persist for decades, reinforcing inequalities in labour markets, political participation and technological governance.