Claude Mythos and the Acceleration of Cybersecurity Risk

By Martyna Chmura | 28 April 2026

Summary

On 7 April 2026, Anthropic announced Claude Mythos Preview but withheld it from commercial release, instead launching Project Glasswing for defensive use.

Mythos is likely to increase cyber risk by compressing the time between vulnerability discovery and exploitation.

Over the coming months, exploitation of known but unpatched vulnerabilities in widely used software is likely to increase, raising the risk of large-scale service disruption and data breaches across critical infrastructure sectors.

Context

On 7 April 2026, Anthropic announced Claude Mythos Preview, a frontier AI model with advanced coding and reasoning capabilities, alongside Project Glasswing, a coordinated initiative to deploy the model defensively across critical software ecosystems. Anthropic did not release Mythos commercially, arguing that frontier models had reached a point where they could “surpass all but the most skilled humans at finding and exploiting software vulnerabilities” and warning that wider access could have severe consequences for “economies, public safety, and national security”.

The decision followed internal and external evaluations indicating a marked increase in cyber capability. Anthropic reported that Mythos had identified thousands of high-severity vulnerabilities, including flaws in major operating systems and web browsers. Among the disclosed cases was a 27-year-old vulnerability in OpenBSD that had persisted despite extensive human review and repeated automated testing.

Anthropic has sought to channel these capabilities into defensive use. Project Glasswing includes major technology and security firms such as Amazon Web Services, Apple, Cisco, CrowdStrike, Google, Microsoft and Palo Alto Networks, and Anthropic has committed up to USD 100m in usage credits, alongside direct support for open-source security efforts. Early deployment suggests practical impact in real-world environments. In collaboration with Mozilla, Anthropic reported that its models identified multiple vulnerabilities in Firefox, several of which were classified as high severity and subsequently patched.

Implications

Claude Mythos is significant because it changes the economics of cyber operations, not simply the quality of offensive tooling. The key shift is that vulnerability discovery and exploit development are becoming cheaper, faster and less dependent on scarce human expertise. An independent assessment by the AI Security Institute (AISI) characterised Mythos as a step change over earlier frontier models. AISI found, for example, that Mythos could autonomously execute multi-stage attacks on vulnerable networks and that it was the first model to solve the 32-step “The Last Ones” takeover simulation. It also achieved a 73% success rate on expert-level capture-the-flag tasks. These results suggest that frontier models are moving from narrow assistance to operationally relevant cyber capability.

The immediate implication is that the constraint in cybersecurity is shifting from detection to remediation. Anthropic’s Mozilla partnership showed that AI-assisted analysis could generate large volumes of credible findings in a short period, including 22 Firefox vulnerabilities in two weeks, 14 of which Mozilla classified as high severity. This matters because the ability to respond depends on how organisations manage and fix these issues, not just on finding them. Software developers and system operators must still check each vulnerability, decide how urgent it is, develop a fix, and distribute updates to users. As AI increases the rate of discovery, the stock of known but unpatched vulnerabilities is likely to grow, especially in open-source and legacy environments.
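The remediation bottleneck can be illustrated with a simple stock-and-flow model: each week the backlog of known but unpatched vulnerabilities grows by new findings and shrinks by fixes shipped. The sketch below is a hypothetical illustration, not a model used by Anthropic or Mozilla; the function name `simulate_backlog` and all weekly rates are assumptions chosen only to show the dynamic.

```python
def simulate_backlog(weeks, discovered_per_week, patched_per_week, initial_backlog=0):
    """Track the stock of known-but-unpatched vulnerabilities week by week.

    Hypothetical model: the backlog grows by new findings and shrinks by
    fixes shipped each week, and can never fall below zero.
    """
    backlog = initial_backlog
    history = []
    for _ in range(weeks):
        backlog = max(0, backlog + discovered_per_week - patched_per_week)
        history.append(backlog)
    return history


# Illustrative rates only (not taken from the source): discovery triples
# while remediation capacity stays flat.
before_ai = simulate_backlog(weeks=12, discovered_per_week=5, patched_per_week=6)
after_ai = simulate_backlog(weeks=12, discovered_per_week=15, patched_per_week=6)
print(before_ai[-1], after_ai[-1])
```

When remediation capacity exceeds the discovery rate, the backlog stays at zero; once discovery outpaces it, the backlog grows linearly with the gap, which is the pattern the paragraph above anticipates for open-source and legacy environments.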

This dynamic is likely to favour attackers in the near term because cyber operations are inherently asymmetric. Attackers need only one successful point of entry, while defenders must secure entire systems and continuously patch weaknesses. Beyond the thousands of high-severity vulnerabilities it has already reported, Anthropic highlighted a 16-year-old FFmpeg flaw that had survived extensive review and 5m automated tests. The implication is not simply more vulnerabilities, but a higher probability that widely shared software dependencies become exploitable at scale.
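The attacker-defender asymmetry can be made concrete with a simple probability calculation: if an attacker needs only one of n flaws to work, the chance of success is 1 − (1 − p)^n. The sketch below assumes, purely for illustration, that each flaw is independently exploitable with the same probability `p_each`; the function name and the 2% figure are hypothetical, and real dependency graphs are neither independent nor uniform.

```python
def p_at_least_one_exploitable(n_flaws, p_each):
    """Probability that at least one of n flaws is exploitable.

    Simplifying assumption: each flaw is independently exploitable with
    the same probability p_each.
    """
    return 1 - (1 - p_each) ** n_flaws


# Hypothetical per-flaw probability of 2%; only the trend matters here.
for n in (1, 10, 50):
    print(n, round(p_at_least_one_exploitable(n, 0.02), 3))
```

Even a small per-flaw probability compounds quickly as the number of discovered but unpatched flaws rises, which is why faster discovery tends to favour the side that needs only one success.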

Banking systems, healthcare networks, logistics platforms, power grids and government systems all rely on software ecosystems with common dependencies and uneven patching capacity. Project Glasswing explicitly frames these sectors as part of a shared cyberattack surface. With cybercrime already estimated at around USD 500b annually, even modest AI-enabled gains in attacker efficiency could generate substantial additional losses.
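A back-of-the-envelope calculation shows the scale involved. The sketch below takes the USD 500b annual estimate from the text and assumes, hypothetically, that losses scale linearly with attacker efficiency; the efficiency-gain percentages are illustrative, not forecasts.

```python
BASELINE_LOSSES_USD_BN = 500  # annual cybercrime estimate cited in the text


def additional_losses(efficiency_gain, baseline=BASELINE_LOSSES_USD_BN):
    """Extra annual losses (USD bn) if losses scale linearly with efficiency.

    Linear scaling is a hypothetical simplification for illustration.
    """
    return baseline * efficiency_gain


for gain in (0.02, 0.05, 0.10):
    print(f"{gain:.0%} efficiency gain -> about USD {additional_losses(gain):.0f}b extra per year")
```

Under this assumption, a 5% gain alone would correspond to roughly USD 25b in additional annual losses.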

Project Glasswing's value lies in concentrating advanced capability among trusted defenders and buying time through accelerated scanning and patching. Its weakness is that it does not solve the underlying diffusion problem, especially since Anthropic itself has noted that securing critical infrastructure may take years, while frontier AI capabilities are advancing over months. The most likely outcome is therefore a temporary defensive advantage for well-resourced actors rather than a stable restoration of control.

Forecast

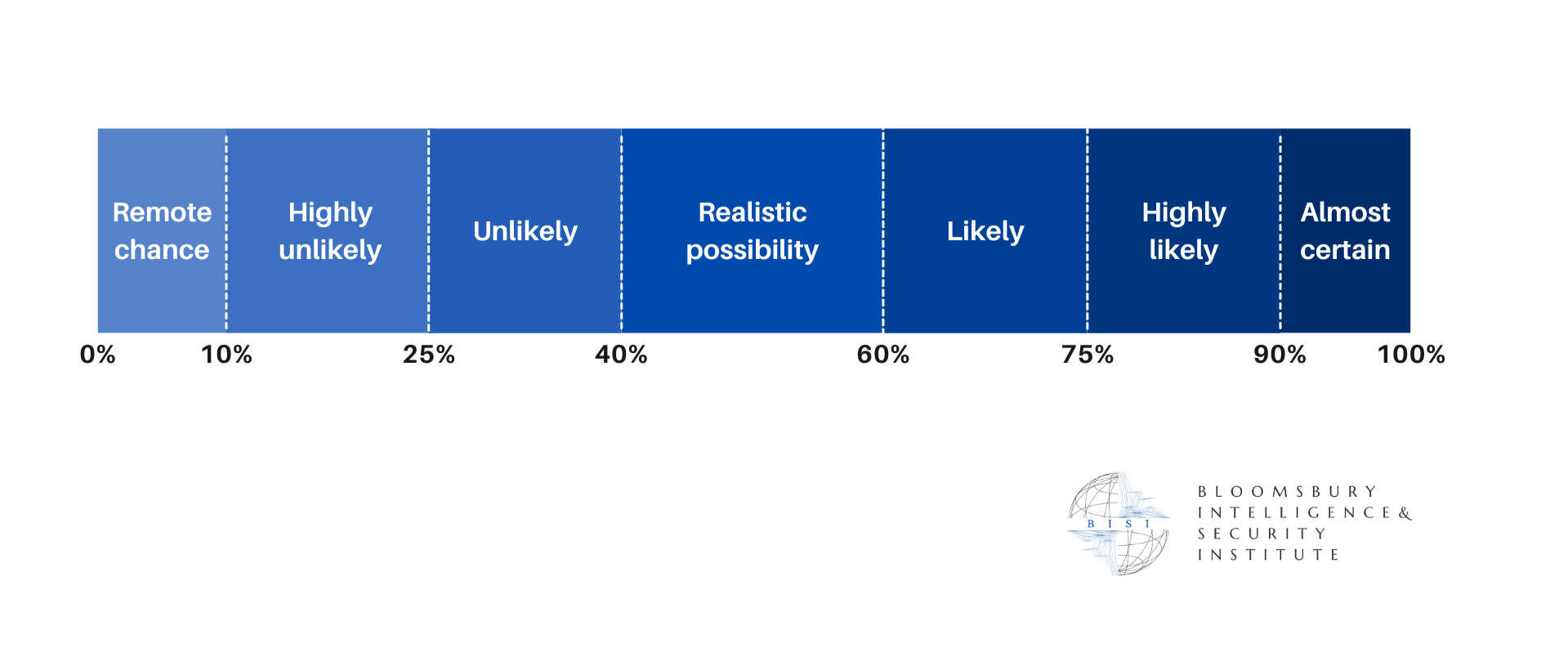

Short-term (Now - 3 months)

A rapid increase in vulnerability identification across critical and widely used software is highly likely, driven by the restricted deployment of Mythos-class models within initiatives such as Project Glasswing.

Remediation bottlenecks are likely, as organisations struggle to triage and patch vulnerabilities at the pace they are discovered, particularly in open-source and legacy systems.

There is a realistic possibility that no immediate surge in large-scale AI-driven cyberattacks will occur, as access to frontier models remains restricted to trusted actors.

Medium-term (3 - 12 months)

There is a realistic possibility of increased exploitation of known but unpatched vulnerabilities, particularly in enterprise software, open-source dependencies, and infrastructure systems with slower patch cycles.

The emergence of comparable capabilities among other AI developers is likely, reducing the effectiveness of restricted release strategies and increasing the availability of advanced cyber capabilities.

Greater involvement of non-state actors in sophisticated cyber operations is likely, as AI lowers the expertise required to execute multi-stage attacks.

Long-term (>1 year)

A sustained increase in baseline cyber risk is highly likely, as AI capabilities diffuse and lower the barriers to conducting high-impact cyber operations.

A more contested and unstable cyber environment is likely, characterised by continuous escalation between offensive and defensive AI systems.

Partial stabilisation driven by large-scale defensive adoption of AI is likely, but it will be uneven across sectors and geographies, reinforcing disparities between well-resourced and less-resourced actors.